|

OpenCV

4.1.0

Open Source Computer Vision

|

Today it is common to have a digital video recording system at your disposal. Sooner or later you will therefore find yourself processing video streams rather than a batch of images. These come in two kinds: a real-time image feed (as with a webcam) or prerecorded files stored on disk. Luckily, OpenCV treats both in the same manner, with the same C++ class. So here's what you'll learn in this tutorial:

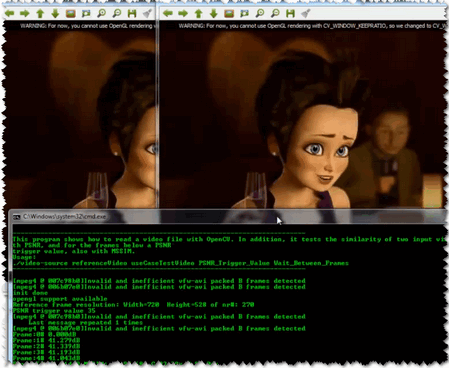

As a test case to show these off with OpenCV, I've created a small program that reads two video files and performs a similarity check between them. You could use this to check how well a new video compression algorithm works. Let there be a reference (original) video, like this small Megamind clip, and a compressed version of it. You can also find the source code and these video files in the samples/data folder of the OpenCV source library.

Essentially, all the functionality required for video manipulation is integrated in the cv::VideoCapture C++ class. This in turn builds on the FFmpeg open source library. FFmpeg is a basic dependency of OpenCV, so you shouldn't need to worry about it. A video is composed of a succession of images, referred to in the literature as frames. A video file has a frame rate that specifies how much time passes between two frames. Video cameras usually have a limit on how many frames they can digitize per second, but this property is less important for them, because at any time the camera sees the current snapshot of the world.

The first task is to assign a source to a cv::VideoCapture object. You can do this either via the cv::VideoCapture::VideoCapture constructor or via its cv::VideoCapture::open function. If the argument is an integer, you bind the object to a camera, a device. The number passed is the ID of the device, assigned by the operating system. If you have a single camera attached to your system, its ID will probably be zero, with further ones increasing from there. If the argument is a string, it refers to a video file and gives the location and name of the file. For example, the program above takes the video files to open from its command line.

We do a similarity check. This requires a reference and a test case video file, and the first two command line arguments refer to these. Here we use relative paths. This means that the application looks in its current working directory, opens the video folder, and tries to find Megamind.avi and Megamind_bug.avi inside it.

To check whether binding the object to a video source succeeded, use the cv::VideoCapture::isOpened function.

Closing the video is automatic when the object's destructor is called. However, if you want to close it earlier, call its cv::VideoCapture::release function. The frames of the video are just simple images, so we only need to extract them from the cv::VideoCapture object and put them inside a Mat. Video streams are sequential: you get the frames one after another with cv::VideoCapture::read or the overloaded >> operator.

These read operations leave the Mat object empty if no frame could be acquired (either because the video stream was closed or because you reached the end of the video file). We can check this with a simple if statement.

A read consists of a frame grab followed by a decode of the grabbed frame. You can call these two steps explicitly with the cv::VideoCapture::grab and then the cv::VideoCapture::retrieve functions.

Videos have a lot of information attached to them besides the content of the frames. These are usually numbers, but in some cases they may be short character sequences (4 bytes or less). To acquire this information there is a general function named cv::VideoCapture::get that returns these properties as double values. Its single argument is the ID of the queried property. Use bitwise operations to decode the characters from the double value, and conversions where the valid values are only integers. For example, you can query the size of the frames in the reference and test case video files, plus the number of frames in the reference.

When working with videos you may often want to control these values yourself. For this there is the cv::VideoCapture::set function. Its first argument is again the ID of the property you want to change, and its second, of double type, contains the value to set. It returns true if it succeeds and false otherwise. A good example is seeking in a video file to a given time or frame.

For the properties you can read and change, see the documentation of the cv::VideoCapture::get and cv::VideoCapture::set functions.

We want to check how imperceptible our video conversion was, so we need a way to check the similarity or difference frame by frame. The most common algorithm used for this is the PSNR (peak signal-to-noise ratio). The simplest definition starts from the mean squared error. Let there be two images, I1 and I2, each of two-dimensional size i by j and composed of c channels.

\[MSE = \frac{1}{c*i*j} \sum{(I_1-I_2)^2}\]

Then the PSNR is expressed as:

\[PSNR = 10 \cdot \log_{10} \left( \frac{MAX_I^2}{MSE} \right)\]

Here \(MAX_I\) is the maximum valid value for a pixel. For the simple case of one byte per pixel per channel this is 255. When two images are the same, the MSE is zero, resulting in an invalid divide-by-zero operation in the PSNR formula. In this case the PSNR is undefined and we need to handle it separately. The transition to a logarithmic scale is made because the pixel values have a very wide dynamic range. All of this can be translated into a C++ function built on OpenCV.

Typical result values are between 30 and 50 for video compression, where higher is better. If the images differ significantly you'll get much lower values, around 15. This similarity check is easy and fast to calculate, but in practice it may turn out to be somewhat inconsistent with human perception. The structural similarity algorithm aims to correct this.

Describing the method goes well beyond the purpose of this tutorial. For that I invite you to read the article introducing it. Nevertheless, you can get a good idea of it by looking at the OpenCV implementation.

The SSIM implementation returns a similarity index for each channel of the image. This value is between zero and one, where one corresponds to a perfect fit. Unfortunately, the many Gaussian blurs are quite costly, so while the PSNR may work in a real-time-like environment (24 frames per second), the SSIM takes significantly more time per frame.

Therefore, the source code presented at the start of the tutorial performs the PSNR measurement for each frame, and the SSIM only for the frames where the PSNR falls below an input value. For visualization purposes we show both images in an OpenCV window and print the PSNR and MSSIM values to the console.

You can watch a runtime instance of this on YouTube here.
