Non-Linear Methods for Regression

Kernel Ridge Regression

- class mlpy.KernelRidge(lmb=1.0, kernel=None)

Kernel Ridge Regression (dual).

Initialization.

Parameters :

- lmb : float (>= 0.0)

Regularization parameter.

- kernel : None or mlpy.Kernel object

If kernel is None, K in learn() and Kt in pred() must be precomputed kernel matrices; otherwise K and Kt must be the training and test data, respectively, in input space.
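With kernel=None the caller builds K and Kt beforehand. The sketch below shows what such precomputed matrices look like for a Gaussian kernel, assuming the common form K[i, j] = exp(-||t_i - x_j||^2 / (2 sigma^2)). gaussian_kernel_matrix is a hypothetical NumPy helper, not part of mlpy:

```python
import numpy as np

def gaussian_kernel_matrix(t, x, sigma=1.0):
    """Hypothetical helper: (M, N) matrix K[i, j] = exp(-||t_i - x_j||^2 / (2 sigma^2)).

    Assumes the usual Gaussian kernel form; mlpy's own function may differ in detail.
    """
    t = np.atleast_2d(np.asarray(t, dtype=float))
    x = np.atleast_2d(np.asarray(x, dtype=float))
    # Squared Euclidean distance between every row of t and every row of x
    sq_dist = (
        np.sum(t ** 2, axis=1)[:, np.newaxis]
        + np.sum(x ** 2, axis=1)[np.newaxis, :]
        - 2.0 * t @ x.T
    )
    sq_dist = np.maximum(sq_dist, 0.0)  # clip tiny negative round-off
    return np.exp(-sq_dist / (2.0 * sigma ** 2))

x = np.arange(0, 2, 0.05).reshape(-1, 1)   # N training points
xt = np.arange(0, 2, 0.01).reshape(-1, 1)  # M test points
K = gaussian_kernel_matrix(x, x, sigma=1.0)    # training kernel, shape (N, N)
Kt = gaussian_kernel_matrix(xt, x, sigma=1.0)  # test kernel, shape (M, N)
```

Rows index the first argument, so the training matrix is square and symmetric, while the test matrix pairs each test point with every training point, matching the call order used in the example at the end of this section.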

- alpha()

Return the dual coefficients alpha.

- b()

Return the bias (offset) term b.
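Under the usual dual form of kernel ridge regression with an offset (an assumption; mlpy's exact equations are not stated here), alpha and b are the two ingredients of the fitted function, where k is the kernel and x_1, ..., x_N are the training points:

```latex
f(\mathbf{x}) = \sum_{i=1}^{N} \alpha_i \, k(\mathbf{x}_i, \mathbf{x}) + b
```

In matrix form this is Kt alpha + b, with one row of Kt per test point.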

- learn(K, y)

Compute the regression coefficients.

Parameters :

- K : 2d array_like object

Precomputed training kernel matrix (if kernel=None), or training data in input space (if kernel is a Kernel object).

- y : 1d array_like object (N)

Target values.
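A minimal NumPy sketch of what learn() computes from a precomputed K. It assumes an LS-SVM-style linear system in which b is not regularized and the dual coefficients sum to zero; this is one standard way to fit kernel ridge regression with an offset, not necessarily mlpy's exact code. kernel_ridge_learn is a hypothetical name:

```python
import numpy as np

def kernel_ridge_learn(K, y, lmb=1.0):
    """Hypothetical sketch: return (alpha, b) for a precomputed kernel matrix K.

    Assumes an LS-SVM-style system with an unpenalized bias b:
        [K + lmb*I   1] [alpha]   [y]
        [1^T         0] [b    ] = [0]
    """
    K = np.asarray(K, dtype=float)
    y = np.asarray(y, dtype=float)
    n = y.shape[0]
    A = np.zeros((n + 1, n + 1))
    A[:n, :n] = K + lmb * np.eye(n)  # regularized kernel block
    A[:n, n] = 1.0                   # column multiplying b
    A[n, :n] = 1.0                   # extra row: sum(alpha) = 0
    rhs = np.append(y, 0.0)
    sol = np.linalg.solve(A, rhs)
    return sol[:n], sol[n]           # alpha, b

# Toy usage with a Gaussian kernel (sigma = 1) on 1-d inputs
x = np.linspace(0.0, 2.0, 40).reshape(-1, 1)
K = np.exp(-(x - x.T) ** 2 / 2.0)
y = np.exp(x).ravel()
alpha, b = kernel_ridge_learn(K, y, lmb=0.01)
```

For lmb > 0 the block K + lmb*I is positive definite, so the bordered system is always solvable.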

- pred(Kt)

Compute the predicted response.

Parameters :

- Kt : 1d or 2d array_like object

Precomputed test kernel matrix (if kernel=None), or test data in input space (if kernel is a Kernel object).

Returns :

- p : float or 1d numpy ndarray

Predicted response.
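The return type follows the shape of Kt: one test point gives a single value, several give an array. A minimal sketch, assuming the prediction is Kt alpha + b; kernel_ridge_pred is a hypothetical helper and the numbers are toy values chosen to be exact in floating point:

```python
import numpy as np

def kernel_ridge_pred(Kt, alpha, b):
    """Hypothetical sketch: predicted response p = Kt @ alpha + b.

    A 1d Kt (one test point against N training points) yields a float;
    a 2d Kt of shape (M, N) yields a 1d array of M predictions.
    """
    Kt = np.asarray(Kt, dtype=float)
    p = Kt @ alpha + b
    return float(p) if Kt.ndim == 1 else p

alpha = np.array([0.5, -0.25])
b = 2.0
Kt = np.array([[1.0, 0.5],
               [0.5, 1.0],
               [0.75, 0.75]])

print(kernel_ridge_pred(Kt, alpha, b))     # three predictions: 2.375, 2.0, 2.1875
print(kernel_ridge_pred(Kt[0], alpha, b))  # 2.375
```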

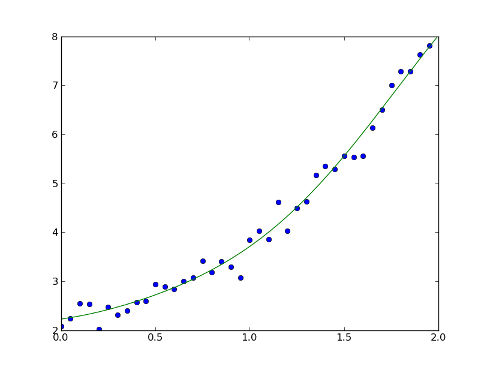

Example:

>>> import numpy as np
>>> import matplotlib.pyplot as plt
>>> import mlpy
>>> np.random.seed(0)
>>> x = np.arange(0, 2, 0.05).reshape(-1, 1) # training points
>>> y = np.ravel(np.exp(x)) + np.random.normal(1, 0.2, x.shape[0]) # target values
>>> xt = np.arange(0, 2, 0.01).reshape(-1, 1) # testing points
>>> K = mlpy.kernel_gaussian(x, x, sigma=1) # training kernel matrix
>>> Kt = mlpy.kernel_gaussian(xt, x, sigma=1) # testing kernel matrix
>>> krr = mlpy.KernelRidge(lmb=0.01)
>>> krr.learn(K, y)
>>> yt = krr.pred(Kt)
>>> fig = plt.figure(1)
>>> plot1 = plt.plot(x[:, 0], y, 'o')
>>> plot2 = plt.plot(xt[:, 0], yt)
>>> plt.show()
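The example fixes lmb=0.01. To see how lmb trades fit against smoothness, the NumPy-only sketch below reuses the example's training data and refits for several values. It does not call mlpy: it assumes the Gaussian kernel form exp(-||x - x'||^2 / (2 sigma^2)) and an LS-SVM-style system with an unpenalized bias, and fit_kernel_ridge is a hypothetical helper:

```python
import numpy as np

def fit_kernel_ridge(K, y, lmb):
    """Hypothetical helper: solve an LS-SVM-style system (an assumption)
    for dual coefficients alpha and an unpenalized bias b."""
    n = y.shape[0]
    A = np.zeros((n + 1, n + 1))
    A[:n, :n] = K + lmb * np.eye(n)
    A[:n, n] = 1.0
    A[n, :n] = 1.0
    sol = np.linalg.solve(A, np.append(y, 0.0))
    return sol[:n], sol[n]

# Same training data as the example
np.random.seed(0)
x = np.arange(0, 2, 0.05).reshape(-1, 1)
y = np.ravel(np.exp(x)) + np.random.normal(1, 0.2, x.shape[0])
K = np.exp(-(x - x.T) ** 2 / 2.0)  # Gaussian kernel, sigma = 1, 1-d inputs

lmbs = [0.001, 0.01, 0.1, 1.0, 10.0]
rmses = []
for lmb in lmbs:
    alpha, b = fit_kernel_ridge(K, y, lmb)
    residual = K @ alpha + b - y
    rmses.append(float(np.sqrt(np.mean(residual ** 2))))
    print(f"lmb={lmb:g}  training RMSE={rmses[-1]:.3f}")
```

Because lmb penalizes the norm of the kernel part of the fit, the training RMSE can only stay the same or grow as lmb increases: small values follow the noisy points closely, large values give smoother curves. Training error alone therefore cannot choose lmb; a held-out set or cross-validation is needed for that.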